Acknowledgements & References

Special thanks to the following mods for providing valuable references and technical inspiration during development:

RimTalk - Dialogue system reference

RimTalk-ExpandActions - Action extension reference

RimTalk-ExpandMemory - Memory expansion reference

RimChat - Dialogue system reference

NewRatkin - Race mod architecture reference

VanillaExpandedFramework - Framework design reference

RimMind - Core

The core infrastructure of the RimMind suite. It provides an LLM client, an asynchronous request queue, and a game context builder, and it is a required dependency for every RimMind sub-module.

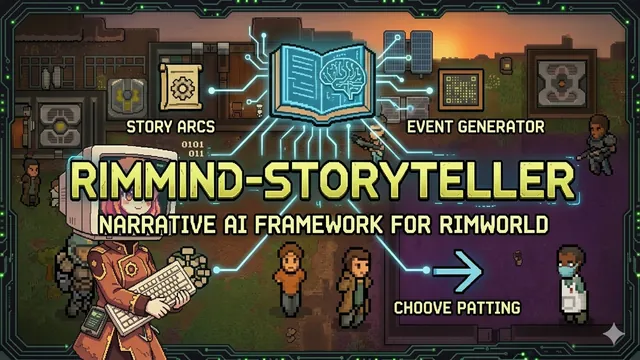

What Is RimMind

RimMind is an AI-powered RimWorld mod suite. By integrating Large Language Models (LLMs), it gives colonists unique personalities, memories, dialogue, and autonomous decision-making.

Sub-mod List & Dependency Relationships

<table>
<tr><td><b>Mod</b></td><td><b>Responsibility</b></td><td><b>Dependencies</b></td><td><b>GitHub</b></td></tr>
<tr><td><b>RimMind-Core</b></td><td>API client, request scheduling, context packaging</td><td>Harmony</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Core</td></tr>
<tr><td>RimMind-Actions</td><td>AI pawn action execution library</td><td>Core</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Actions</td></tr>
<tr><td>RimMind-Advisor</td><td>AI generates work decisions for pawns</td><td>Core, Actions</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Advisor</td></tr>
<tr><td>RimMind-Dialogue</td><td>AI-driven dialogue system</td><td>Core</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Dialogue</td></tr>
<tr><td>RimMind-Memory</td><td>Memory collection and context injection</td><td>Core</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Memory</td></tr>
<tr><td>RimMind-Personality</td><td>AI-generated personalities and inner thoughts</td><td>Core</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Personality</td></tr>
<tr><td>RimMind-Storyteller</td><td>AI storyteller for intelligent event selection</td><td>Core</td><td>https://github.com/RimWorld-RimMind-Mod/RimWorld-RimMind-Mod-Storyteller</td></tr>
</table>

Core ── Actions ── Advisor
  ├── Dialogue
  ├── Memory
  ├── Personality
  └── Storyteller
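The dependency relationships above imply a load order in which Core comes first and Advisor comes after Actions. As a minimal sketch (not part of the mod itself), the following Python snippet derives one valid order from the listed dependencies using the standard library's `graphlib`. The short mod names and the `load_order` helper are illustrative assumptions; Harmony is left out because it is an external library.

```python
from graphlib import TopologicalSorter

# Direct dependencies as listed in the sub-mod table (Harmony omitted,
# since it is an external library rather than a RimMind sub-mod).
DEPENDENCIES = {
    "Core": set(),
    "Actions": {"Core"},
    "Advisor": {"Core", "Actions"},
    "Dialogue": {"Core"},
    "Memory": {"Core"},
    "Personality": {"Core"},
    "Storyteller": {"Core"},
}


def load_order(deps):
    """Return one valid order in which every mod follows its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = load_order(DEPENDENCIES)
print(order)
```

Several orders are valid; the only guarantees are that Core is first and that Actions precedes Advisor.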

Core Features

[list]

[*] <b>Intelligent Context Construction</b> - Automatically collects game state (pawn mood, health, skills, equipment, colony wealth, threat level, season, and weather) so the AI has complete information for decision-making.

[*] <b>Asynchronous Request Queue</b> - Manages LLM requests without blocking the main game thread, with support for request priority, cooldown control, automatic retries, pausing, and cancellation.

[*] <b>Modular API Architecture</b> - All RimMind components call the API through unified interfaces, independent of the underlying implementation.

[*] <b>Multi-Provider Support</b> - Compatible with APIs in the OpenAI Chat Completions format, including OpenAI, DeepSeek, and Ollama, as well as Player2 services (local application + remote API).

[*] <b>Context Filter</b> - Over 28 configurable options to precisely control prompt content and reduce token usage.

[*] <b>SkipCheck Mutual Exclusion Mechanism</b> - Sub-modules can register skip rules for dialogues, floating menus, actions, and story events, giving cross-mod mutual exclusion control.

[*] <b>Debug Toolkit</b> - Built-in AI request log window that shows the full input and output of each AI call in real time for troubleshooting.

[/list]
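To make the request queue's scheduling rules concrete, here is a minimal single-threaded Python sketch of priority ordering, per-key cooldown, and discarding of expired requests. It is an illustration under stated assumptions, not the mod's implementation: the class and field names are hypothetical, lower priority numbers are assumed to be served first, and time is passed in explicitly so the behaviour is easy to follow.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class Request:
    priority: int                            # lower value = served first (assumption)
    seq: int                                 # tie-breaker: FIFO within one priority
    key: str = field(compare=False)          # hypothetical id, e.g. "Dialogue:pawn42"
    expires_at: float = field(compare=False)


class RequestQueue:
    def __init__(self, cooldown_seconds):
        self.cooldown = cooldown_seconds
        self._heap = []
        self._seq = itertools.count()
        self._last_sent = {}                 # key -> time of last dispatch

    def submit(self, key, priority, now, ttl):
        """Enqueue unless the key is still cooling down; return True if queued."""
        last = self._last_sent.get(key)
        if last is not None and now - last < self.cooldown:
            return False
        heapq.heappush(
            self._heap, Request(priority, next(self._seq), key, now + ttl)
        )
        return True

    def next_request(self, now):
        """Pop the highest-priority request that has not expired, or None."""
        while self._heap:
            req = heapq.heappop(self._heap)
            if req.expires_at <= now:
                continue                     # expired while waiting: discard
            self._last_sent[req.key] = now
            return req
        return None
```

A real queue would dispatch `next_request` results from a background worker; the policy shown here is the part that decides what that worker sends next.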

Technical Highlights

- Compatible with multiple AI services: OpenAI, DeepSeek, Ollama, Player2

- Configurable context filters with three presets: Minimal, Standard, and Full

- Native JSON mode support to ensure structured AI responses

- Request status floating window for real-time AI task monitoring

- Automatic retry for transient errors: timeouts and HTTP 429, 502, and 503 responses

- Serial processing for local models to avoid resource contention

- Prompt token budget management with automatic compression and truncation
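The automatic retry behaviour listed above can be sketched as follows. This is a hedged Python illustration rather than the mod's code: `HttpError`, `call_with_retry`, the attempt limit, and the exponential backoff delays are all assumptions, since the description only names which errors count as transient.

```python
import time

RETRYABLE_STATUS = {429, 502, 503}  # status codes named in the list above


class HttpError(Exception):
    """Hypothetical error type carrying an HTTP status code."""

    def __init__(self, status):
        super().__init__(f"HTTP {status}")
        self.status = status


def is_transient(exc):
    """Timeouts and the listed HTTP statuses are worth retrying."""
    if isinstance(exc, TimeoutError):
        return True
    return isinstance(exc, HttpError) and exc.status in RETRYABLE_STATUS


def call_with_retry(send, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call send() until it succeeds, retrying transient errors with backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return send()
        except Exception as exc:
            if attempt == max_attempts or not is_transient(exc):
                raise
            # Assumed policy: wait base_delay, then double it on each retry.
            sleep(base_delay * 2 ** (attempt - 1))
```

Errors outside the transient set, such as an HTTP 400, are re-raised immediately so a malformed request is not resent.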

Supported API Endpoints

<table>
<tr><td><b>Service</b></td><td><b>Endpoint</b></td><td><b>Description</b></td></tr>
<tr><td>OpenAI</td><td>https://api.openai.com/v1</td><td>Models such as GPT-4o-mini</td></tr>
<tr><td>DeepSeek</td><td>https://api.deepseek.com/v1</td><td>Models such as deepseek-chat</td></tr>
<tr><td>Ollama (Local)</td><td>http://localhost:11434/v1</td><td>Self-hosted local language models</td></tr>
<tr><td>Player2</td><td>Auto-detected / Manually configured</td><td>Player2 local client or remote API</td></tr>
<tr><td>Others</td><td>Custom Base URL</td><td>Any OpenAI-compatible API interface</td></tr>
</table>
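For the OpenAI-compatible endpoints above, a request is a JSON POST to the base URL plus `/chat/completions`. The Python sketch below only builds such a request and does not send it; the function name and its parameters are illustrative assumptions, and Player2's own API is not covered.

```python
import json


def build_chat_request(base_url, api_key, model, system_prompt, user_prompt,
                       force_json=True):
    """Return (url, headers, body) for an OpenAI-compatible Chat Completions call."""
    url = base_url.rstrip("/") + "/chat/completions"
    headers = {"Content-Type": "application/json"}
    if api_key:  # assumption: a local server may be used without a key
        headers["Authorization"] = f"Bearer {api_key}"
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }
    if force_json:
        # JSON mode: ask the model to reply with a single JSON object.
        payload["response_format"] = {"type": "json_object"}
    return url, headers, json.dumps(payload)


url, headers, body = build_chat_request(
    "https://api.deepseek.com/v1", "sk-example", "deepseek-chat",
    "Reply in JSON.", "How is the colony doing?",
)
print(url)
```

Passing `force_json=False` drops the `response_format` field, which mirrors the option to disable JSON mode for local models that do not support it.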

Core Functions

<b>LLM Client</b>

Compatible with any API that follows the OpenAI Chat Completions format, including OpenAI, DeepSeek, and local Ollama. Player2 services are also supported, with automatic detection of the local app and access to the remote API. Forced JSON mode (response_format: json_object) is available and can be disabled for local models.

<b>Asynchronous Request Queue</b>

All AI requests run on background threads and never block the main game thread. Each request has its own cooldown, and expired requests are discarded automatically. Transient errors are retried automatically. Local model requests are processed serially to prevent resource conflicts.

<b>Context Generation</b>

Automatically collects game state and packages it as text for all modules:

[list]

[*] <b>World Context</b>: Time, pawn list, food supply, threats, seasons, weather

[*] <b>Pawn Context</b>: Age, backstory, mood, health, skills, gear, job assignments, relationships

[/list]
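As a rough illustration of how world and pawn data might be packaged as text, the Python sketch below renders two dictionaries into labelled lines. The output layout, the `build_context` name, and the sample values are assumptions made for this example; the mod's actual prompt format is not described here.

```python
def build_context(world, pawn):
    """Render world and pawn data as labelled text lines for a prompt."""
    lines = ["[World]"]
    lines += [f"{name}: {value}" for name, value in world.items()]
    lines.append(f"[Pawn: {pawn['name']}]")
    lines += [f"{name}: {value}" for name, value in pawn.items() if name != "name"]
    return "\n".join(lines)


# Hypothetical sample data.
world = {"Season": "Winter", "Weather": "Snow", "Threats": "None"}
pawn = {"name": "Alice", "Age": 32, "Mood": "Content", "Health": "Healthy"}
print(build_context(world, pawn))
```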

<b>Context Filtering</b>

Context filter settings control exactly which game information is inserted into prompts, which saves tokens. Three presets (Minimal, Standard, Full) are provided, plus more than 28 individual toggles.

<b>Debug Tools</b>

[list]

[*] <b>AI Debug Log</b>: Floating panel showing the full prompt and response of every AI interaction

[*] <b>Request Floating Window</b>: Real-time AI request status, displayed in the bottom-right corner

[*] <b>Developer Menu</b>: Test connections, view context data, reset cooldowns, pause/resume request queues

[/list]
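The context filtering described earlier in this section (a preset plus individual toggles) can be sketched in Python as below. The field names and the contents of each preset are invented for illustration only; the mod's real toggle names and preset definitions are not listed in this description.

```python
# Hypothetical field names and preset contents, for illustration only.
PRESETS = {
    "Minimal": {"mood", "health"},
    "Standard": {"mood", "health", "skills", "job"},
    "Full": {"mood", "health", "skills", "job", "backstory", "relationships", "gear"},
}


def apply_filter(context, preset="Standard", overrides=None):
    """Keep only the fields enabled by the preset, adjusted by per-field toggles."""
    enabled = set(PRESETS[preset])
    for field_name, on in (overrides or {}).items():
        if on:
            enabled.add(field_name)
        else:
            enabled.discard(field_name)
    return {k: v for k, v in context.items() if k in enabled}


pawn_context = {
    "mood": "content",
    "health": "healthy",
    "skills": "Shooting 8",
    "gear": "bolt-action rifle",
}
print(apply_filter(pawn_context, "Minimal"))
```

Dropping fields before the prompt is built is what reduces token usage: a smaller preset simply sends fewer lines to the model.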

Frequently Asked Questions

<b>Q: Which large language models are supported?</b>

A: Any model compliant with the OpenAI Chat Completions API standard, including