Description:

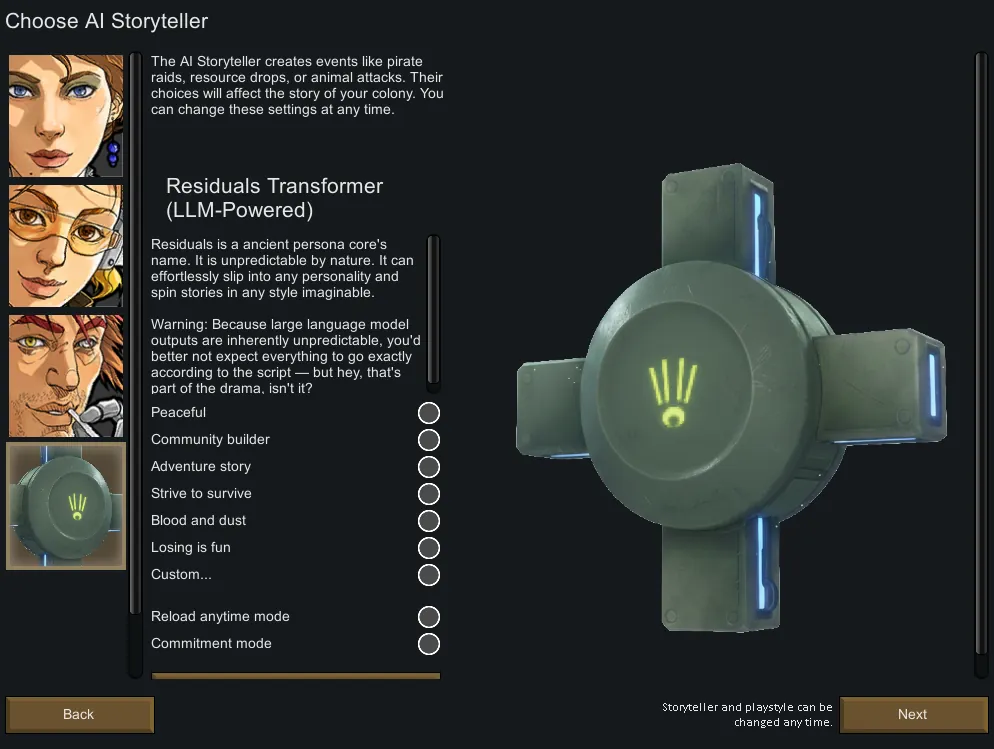

This mod adds an AI-driven (large language model) storyteller called “Residuals.” It purposefully generates events based on the current state of the colony, its development trends, and historical records, driving the colony’s narrative forward according to a chosen style.

An API connection is required for proper operation.

Fully customizable — you can modify the prompt in the mod settings to adjust the storyteller’s style. From a cozy, idyllic pastoral life to a blood-soaked battlefield of steel and fire, it’s entirely up to you.

If you come up with interesting prompts, feel free to share them in the discussion!

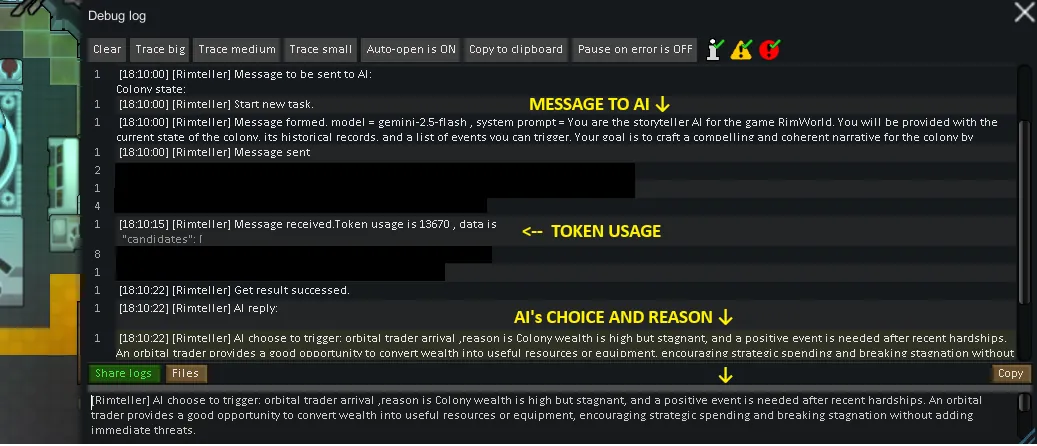

Overview of How It Works:

- Summarize the colony’s recent status at event checkpoints;

- Send the summary, prompts, and all triggerable events to the large language model;

- The large language model returns the name of the event to trigger and the reasoning;

- Trigger the event.
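The four steps above can be sketched roughly as follows. This is an illustrative Python sketch, not the mod's actual code: the function names, the JSON reply shape, the example event names, and the `fake_model` stand-in are all assumptions made for the example.

```python
import json
from typing import Callable


def build_prompt(summary: str, style_prompt: str, events: list[str]) -> str:
    """Combine the style prompt, the colony summary, and the triggerable events."""
    event_lines = "\n".join(f"- {name}" for name in events)
    return (
        f"{style_prompt}\n\n"
        f"Colony summary:\n{summary}\n\n"
        f"Triggerable events:\n{event_lines}\n\n"
        'Reply with JSON: {"event": "<name>", "reason": "<why>"}'
    )


def choose_event(prompt: str, ask_model: Callable[[str], str]) -> tuple[str, str]:
    """Send the prompt and parse the event name and the reasoning from the reply."""
    reply = json.loads(ask_model(prompt))
    return reply["event"], reply["reason"]


# Hypothetical stand-in for a real API call, so the sketch runs offline.
def fake_model(prompt: str) -> str:
    return json.dumps(
        {"event": "TraderCaravanArrival", "reason": "The colony is short on medicine."}
    )


# Illustrative event names; the real list comes from the game and installed mods.
events = ["RaidEnemy", "TraderCaravanArrival", "ColdSnap"]
prompt = build_prompt("Food is stable; medicine is low.", "Cozy pastoral tone.", events)
event, reason = choose_event(prompt, fake_model)
print(event, "-", reason)
```

Swapping `fake_model` for a function that calls a real provider leaves the rest of the flow unchanged.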

Events with their own internal logic (such as special events, diseases, some raids, and some quests) do not go through the AI and instead use the vanilla triggering logic directly.

For more information, please refer to: What Is Sent to the AI, and What Does It Do?

API Usage Guide:

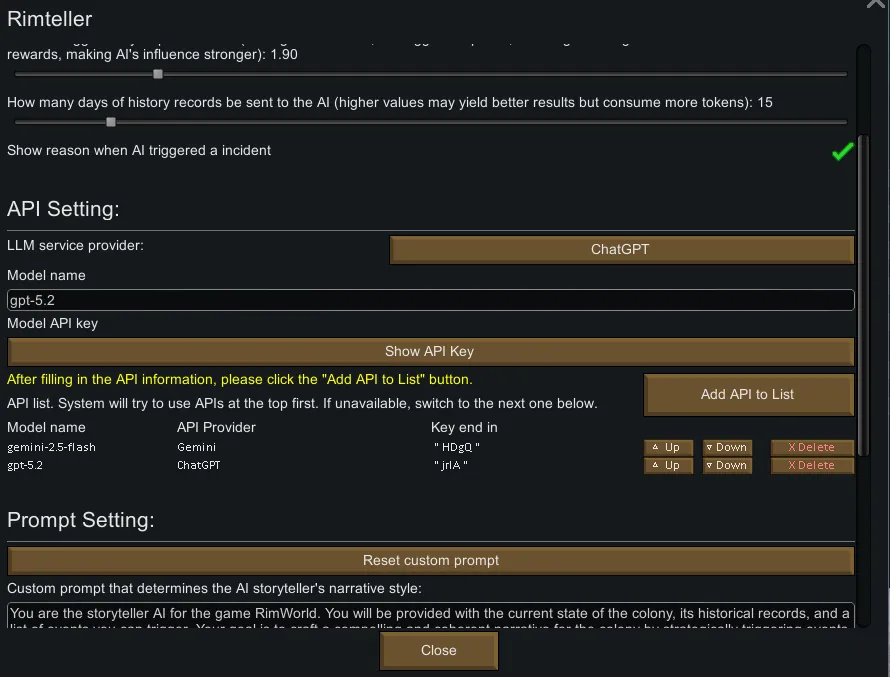

- ChatGPT, Deepseek, and Gemini are supported directly. Simply paste the API key and model name in the settings.

- Other providers compatible with the OpenAI Completions API are also supported (most providers are). For these, you also need to enter the model endpoint URL.

- How to obtain an API key: Visit the corresponding provider’s website and follow their application instructions.

- How to obtain the model name:

  - ChatGPT: Go to the ChatGPT API website (platform.openai.com), select a suitable model, open its page, scroll down to the “Snapshots” section, and copy the model name on the right (e.g., “gpt-5-mini”).

  - Gemini: Go to the Gemini API website (ai.google.dev), select a suitable model, and copy the “Model code” (e.g., “gemini-2.5-flash”).

  - Deepseek: Go to the Deepseek API website (api-docs.deepseek.com) and copy the “MODEL” entry from the table (e.g., “deepseek-chat”).

  - For other providers, please refer to their websites for model names and endpoint URLs.
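To show how the three settings (API key, model name, endpoint URL) fit together, here is a minimal Python sketch that builds a request in the common OpenAI-style chat format. It assumes the provider accepts that format at `<endpoint>/chat/completions`; the endpoint URL and key below are placeholders, and how the mod builds its own requests is not described here.

```python
import json
import urllib.request


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    base_url="https://example.com/v1",  # placeholder endpoint URL
    api_key="sk-your-key-here",         # placeholder API key
    model="deepseek-chat",              # model name copied from the provider's docs
    prompt="Pick the next event.",
)
print(request.get_method(), request.full_url)
```

If a provider rejects a request of this shape, its documentation should list the exact endpoint path and model names to use.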

Settings Guide:

- Slider 1: Event frequency multiplier. Higher values increase how often the AI generates events, and also increase token consumption over the same in-game time. If set too high, a new event may trigger before the previous one finishes generating, causing failures.

- Slider 2: Event points multiplier. Higher values increase the points of AI-generated events (such as raids and quests), resulting in a greater impact on the story.

- Slider 3: History length. Determines how much of the colony’s history is sent to the AI. This is one of the main sources of token usage; higher values may produce more accurate events.
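One way to picture what the three sliders do is the Python sketch below. It is only a mental model: the field names, the simple division and multiplication, and the unit of the history length are assumptions, not the mod's actual formulas. The 1.35-day base interval is the default average quoted under Performance Impact & Token Usage.

```python
from dataclasses import dataclass


@dataclass
class StorytellerSettings:
    frequency_multiplier: float = 1.0  # Slider 1
    points_multiplier: float = 1.0     # Slider 2
    history_length: int = 10           # Slider 3 (entries; the unit is an assumption)


def days_between_events(base_days: float, s: StorytellerSettings) -> float:
    """A higher frequency multiplier means a shorter gap between AI requests."""
    return base_days / s.frequency_multiplier


def scaled_points(game_points: float, s: StorytellerSettings) -> float:
    """The game decides the base points; the slider only scales them."""
    return game_points * s.points_multiplier


def history_to_send(history: list[str], s: StorytellerSettings) -> list[str]:
    """Keep only the most recent entries; longer history costs more tokens."""
    return history[-s.history_length:] if s.history_length > 0 else []


settings = StorytellerSettings(
    frequency_multiplier=2.0, points_multiplier=1.5, history_length=3
)
print(days_between_events(1.35, settings))
print(scaled_points(400, settings))
print(history_to_send(["day 1", "day 2", "day 3", "day 4", "day 5"], settings))
```

Under this model, doubling Slider 1 halves the time between requests and so roughly doubles token use over the same in-game period.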

Performance Impact & Token Usage:

No performance impact. All requests are asynchronous and will not cause stuttering or delays.

With ~700 mods and default settings, in the late game each generated event consumes approximately 7k–13k tokens, most of which are lower-cost input tokens. Given the low frequency of in-game events (average time between events is 1.35 in-game days), a single save file (calculated over 5 in-game years) consumes only about 2M tokens in total.

Estimated costs: GPT-5.2 under $3.5, Gemini 2.5 Pro under $2.5, and the Deepseek series under 4 RMB (about $0.6). Gemini 2.5 Pro’s free quota (20 calls per day) is enough for roughly half an in-game year.
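The token estimate can be checked with a few lines of arithmetic. The Python sketch below uses only the figures quoted above, plus the fact that a RimWorld year lasts 60 in-game days; it does not model provider pricing.

```python
DAYS_PER_YEAR = 60                  # a RimWorld year is 60 in-game days
YEARS = 5                           # save length used in the estimate
DAYS_BETWEEN_EVENTS = 1.35          # average gap at default settings
TOKENS_PER_EVENT = (7_000, 13_000)  # late-game range per generated event

# Number of AI-generated events over the whole save.
events = YEARS * DAYS_PER_YEAR / DAYS_BETWEEN_EVENTS
low, high = (events * tokens for tokens in TOKENS_PER_EVENT)
print(f"events per save: {events:.0f}")
print(f"tokens per save: {low / 1e6:.1f}M to {high / 1e6:.1f}M")

# In-game time covered by 20 free calls.
FREE_CALLS = 20
days_covered = FREE_CALLS * DAYS_BETWEEN_EVENTS
print(f"20 calls cover {days_covered:.0f} in-game days "
      f"({days_covered / DAYS_PER_YEAR:.2f} of a year)")
```

The range comes out at roughly 1.6M to 2.9M tokens when every event is priced at the late-game rate, which is consistent with the figure of about 2M once cheaper early-game events are taken into account.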

Automatic API switching is now supported.

Compatibility & Save Safety:

- Automatically retrieves the full list of currently triggerable events, and is compatible with mods that add new events.

- Tested in saves with over 700 mods, with no incompatibilities found.

- The AI is only responsible for “choosing which event to generate.” Event points and frequency are still determined by the game itself and the multipliers in the settings, preventing save-breaking situations like “10,000-point raids at game start.”

- If AI event generation fails, Randy will step in and generate a random event.
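The safety behavior described in this list can be sketched as a small validation step. The Python below is a hypothetical illustration, not the mod's code: `resolve_event` and the event names are made up for the example, and the random pick simply stands in for Randy taking over.

```python
import random
from typing import Optional


def resolve_event(ai_choice: Optional[str], triggerable: list[str]) -> tuple[str, str]:
    """Return (event, source).

    The AI's answer is used only if it names an event that can actually be
    triggered right now. A failed request (None) or a hallucinated name
    falls back to a random pick.
    """
    if ai_choice in triggerable:
        return ai_choice, "ai"
    return random.choice(triggerable), "random"


# Illustrative event names; the real list is read from the game at runtime.
triggerable = ["RaidEnemy", "TraderCaravanArrival", "ColdSnap"]

print(resolve_event("ColdSnap", triggerable))       # valid AI choice
print(resolve_event("DragonAttack", triggerable))   # hallucinated name
print(resolve_event(None, triggerable))             # request failed
```

Because the AI only supplies a name, event points never pass through the model; they stay with the game and the multipliers in the settings.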

Note: It is recommended to use large, high-quality models — severe hallucinations can genuinely disrupt the story pacing.

Q&A

- Event generation keeps failing and Randy always takes over?

  - Check whether the model name, URL, and API key are correct;

  - Check your network connection;

  - Press “~” to open the log, find yellow warnings starting with “[Rimteller],” and check the error details.

- I can’t understand why the AI triggered the current event.

  Press “~” to open the log, find the white message starting with “[Rimteller] AI choose to trigger:”, and read the AI’s reasoning.

- Why is this marked as “Experimental”?

  Because this mod allows AI to directly and significantly affect your gameplay experience… which is quite radical.

- Who are the three people on the cover?

  They are the starting pawns from a test save. To test the narrative quality of AI-generated quests, they went through many strange and unusual experiences.

Future Plans:

- Replace the storyteller portrait (still iterating with nano banana). Feel free to share any suggestions!

- Bug fixes (if any) and QOL improvements

- Add automatic API switching (done)

- Other new features, now that automatic API switching is complete

Suggestions are welcome!